Photographic Visualization of Weather Forecasts

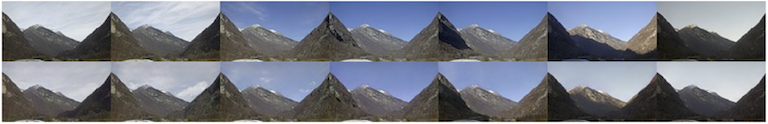

Outdoor webcam images visualize many aspects of the past and present weather. As they are also easy to interpret, they are consulted by meteorologists and the general public alike. Weather forecasts, in contrast, are communicated as text, pictograms or charts, each focusing on separate aspects of the future weather. We therefore introduce a method that uses photographic images to also visualize weather forecasts.

This is challenging because photographic visualizations of weather forecasts should look real, be free of obvious artifacts, and match the predicted weather conditions. The transition from observation to forecast should be seamless, and there should be visual continuity between images for consecutive lead times. We use conditional Generative Adversarial Networks (GANs) to synthesize such visualizations. The generator network, conditioned on the analysis and the forecast state of the numerical weather prediction (NWP) model, transforms the present camera image into an image of the future. The discriminator network judges whether a given image is the real image of the future or has been synthesized. Training the two networks against each other results in a visualization method that scores well on all four evaluation criteria.
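The adversarial game between generator and discriminator is commonly trained with binary cross-entropy losses. The sketch below shows those losses and one way to condition the discriminator on its input. The function names, the channel-stacking layout (H x W x C), and the choice of the standard non-saturating GAN loss are all assumptions for illustration; the paper's actual objective and architecture may differ.

```python
import numpy as np

EPS = 1e-12  # keeps log() finite when a probability is exactly 0 or 1


def discriminator_input(condition, image):
    """Pair an image with its conditioning input by stacking channels.

    Assumption: `condition` holds the present camera image plus NWP fields
    rasterized to the same H x W grid; the real layout may differ.
    """
    return np.concatenate([condition, image], axis=-1)


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: push D(real future) -> 1 and D(synthesized) -> 0."""
    d_real = np.clip(d_real, EPS, 1 - EPS)
    d_fake = np.clip(d_fake, EPS, 1 - EPS)
    return float(-np.mean(np.log(d_real)) - np.mean(np.log(1 - d_fake)))


def generator_loss(d_fake):
    """Non-saturating generator loss: push D(synthesized) -> 1."""
    d_fake = np.clip(d_fake, EPS, 1 - EPS)
    return float(-np.mean(np.log(d_fake)))
```

When the discriminator can no longer tell real from synthesized images and outputs 0.5 everywhere, its loss settles at 2 ln 2, the equilibrium of this standard formulation.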

AIES 2023 paper | GitHub repository | Zenodo upload

Colloquium in Climatology, Climate Impact and Remote Sensing 2022 presentation

RSI TV segment “Il Quotidiano” (14.02.24)

Probabilistic Plausibility of Surface Data

The quality control (QC) of MeteoSwiss surface data takes place at several steps along the data processing chain: in the instrument itself, in the logger, during import into the data warehouse, and hours, days and even years later. The early tests run in real time but are very limited in scope: they can only flag measurements that are physically impossible. Later tests are more sophisticated and often involve measurements from other instruments and sites. They find more implausible measurements, but also generate false positives.

It is a challenge to combine the quality information (QI) from all these independent QC systems. We propose to use the probabilistic plausibility of the measurement, represented on a continuous scale between 0 (impossible) and 1 (confirmed by an expert). The probabilistic plausibility is calculated from the a priori plausibility of the measurement parameter, all available test outcomes and any expert inspection, using the well-known Naïve Bayes procedure, which treats the test outcomes as conditionally independent. Each test outcome thus influences the probabilistic plausibility according to the strength of its evidence, and as soon as a new test outcome is available, the plausibility of the measurement can be updated efficiently.

Summarizing all available QI in a single number helps our users to set an appropriate threshold on the minimum data quality that they need in their application.
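A minimal sketch of a Naïve Bayes plausibility update, assuming each test outcome is described by two likelihoods: how probable that outcome is for a correct value and for an incorrect one. Working in log-odds turns every new outcome into a single addition. The class, its method names and all numbers in the example are hypothetical and do not describe the MeteoSwiss implementation.

```python
import math
from dataclasses import dataclass


def _sigmoid(x: float) -> float:
    """Numerically stable logistic function; also handles +/- infinity."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


@dataclass
class Plausibility:
    """Probabilistic plausibility of one measurement, stored as log-odds.

    Naive Bayes treats test outcomes as conditionally independent given
    whether the measurement is correct, so each outcome simply adds its
    log-likelihood ratio: an O(1) update per new outcome.
    """

    log_odds: float

    @classmethod
    def from_prior(cls, prior: float) -> "Plausibility":
        """prior: a priori probability (0 < prior < 1) that a measurement
        of this parameter is correct."""
        return cls(math.log(prior / (1.0 - prior)))

    def update(self, p_if_correct: float, p_if_wrong: float) -> None:
        """Fold in one test outcome.

        p_if_correct: probability of this outcome for a correct value.
        p_if_wrong:   same for an incorrect value; must be > 0, so the
                      log-odds stay finite until an expert confirms.
        """
        if p_if_correct == 0.0:
            self.log_odds = -math.inf  # outcome impossible for a correct value
        else:
            self.log_odds += math.log(p_if_correct / p_if_wrong)

    def expert(self, confirmed: bool) -> None:
        """An expert verdict overrides all statistical evidence."""
        self.log_odds = math.inf if confirmed else -math.inf

    @property
    def value(self) -> float:
        """Plausibility on the 0 (impossible) to 1 (confirmed) scale."""
        return _sigmoid(self.log_odds)


# Hypothetical example: a usually reliable parameter, one test passes and
# another raises a flag. The likelihoods are invented for illustration.
p = Plausibility.from_prior(0.99)
p.update(0.95, 0.30)  # e.g. a spatial-consistency test passed
p.update(0.10, 0.60)  # e.g. a temporal-step test raised a flag
print(round(p.value, 3))  # → 0.981
usable = p.value >= 0.9  # a user-chosen minimum quality threshold
```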

EUMETNET STAC WG AQC 2019 presentation

EUMETNET DMW 2017 presentation

Camera Based Visibility Estimation

MeteoSwiss operates a network of panorama cameras positioned along Switzerland’s main flight paths. One purpose of this network is to support meteorologists in creating general aviation forecasts (GAFORs), which predict the weather conditions relevant for visual flight. The GAFOR summarizes the expected prevailing visibility and cloud base height along the flight path.

Visibility is currently estimated from visual observations and measured using transmissometers. Transmissometers provide automated measurements, but only of the atmosphere at the measurement site.

We aim to increase the spatial and temporal availability of the visibility parameter. The benefit of using cameras to estimate visibility is two-fold. First, we reuse the existing camera network instead of procuring and operating additional instruments. Second, panorama cameras observe more of the atmosphere than transmissometers, which makes their estimates more representative when the atmosphere is strongly inhomogeneous.

MET Alliance ET AUTO OBS 2020 presentation

Learning Dictionaries with Bounded Coherence

Over-complete dictionaries can achieve a lower approximation error than orthogonal dictionaries in sparse coding applications. On the one hand, increasing the number of dictionary atoms leads to sparser solutions. On the other hand, a dictionary with low self-coherence has several advantages, such as a more rapid decay of the residual error. The two goals conflict, because the more atoms a dictionary has, the higher its lowest achievable self-coherence. We propose a dictionary learning algorithm that can achieve any trade-off between the two objectives.
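Self-coherence is the largest absolute inner product between two distinct normalized atoms. The sketch below computes it together with the Welch bound, a standard lower bound on the coherence of k unit-norm vectors in d dimensions, which grows with k and so makes the trade-off concrete. It only illustrates the quantity being controlled, not the paper's learning algorithm; the function names are hypothetical.

```python
import numpy as np


def mutual_coherence(D):
    """Self-coherence of dictionary D (columns are atoms): the largest
    absolute inner product between two distinct unit-norm atoms."""
    Dn = D / np.linalg.norm(D, axis=0, keepdims=True)
    G = np.abs(Dn.T @ Dn)     # Gram matrix of the normalized atoms
    np.fill_diagonal(G, 0.0)  # ignore each atom's product with itself
    return float(G.max())


def welch_bound(d, k):
    """Lower bound on the coherence of any k unit-norm atoms in R^d (k > d)."""
    return float(np.sqrt((k - d) / (d * (k - 1))))


D = np.hstack([np.eye(4), np.ones((4, 1))])  # orthonormal basis plus one extra atom
print(mutual_coherence(D), welch_bound(4, 5))  # → 0.5 0.25
```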

IEEE SPL 2012 paper | Further material

Speech Enhancement with Sparse Coding in Learned Dictionaries

Speech enhancement is difficult when the target and interferer sources are partially coherent, as is the case for speech in the presence of babble noise. We propose a sparse coding algorithm (called LARC) for training dictionaries and enhancing speech in the presence of challenging non-stationary interferers.
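A common scheme for enhancement with learned dictionaries is to sparse-code each noisy frame in the concatenation of a speech dictionary and an interferer dictionary, then resynthesize only the speech part. The sketch below shows that step, assuming both dictionaries are already trained and have unit-norm atoms. It substitutes plain orthogonal matching pursuit (OMP) with a fixed number of atoms for LARC and does not reproduce LARC's selection or stopping rules; the function names are hypothetical.

```python
import numpy as np


def omp(D, x, n_atoms):
    """Orthogonal matching pursuit: greedily select up to n_atoms columns of D
    (assumed unit-norm) and least-squares fit x on the selected atoms."""
    residual = x.astype(float)  # copy, so x is left untouched
    support = []
    sol = np.zeros(0)
    for _ in range(n_atoms):
        k = int(np.argmax(np.abs(D.T @ residual)))
        if k in support:  # only happens when the residual is essentially zero
            break
        support.append(k)
        sol, *_ = np.linalg.lstsq(D[:, support], x, rcond=None)
        residual = x - D[:, support] @ sol
    coef = np.zeros(D.shape[1])
    coef[support] = sol
    return coef


def enhance(x, D_speech, D_noise, n_atoms):
    """Code noisy frame x in the joint dictionary and keep only the speech part."""
    D = np.hstack([D_speech, D_noise])
    coef = omp(D, x, n_atoms)
    return D_speech @ coef[: D_speech.shape[1]]
```

When speech and interferer atoms are partially coherent, as with babble noise, the pursuit can attribute speech energy to interferer atoms or vice versa, which is exactly where this simple separation breaks down.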

IEEE Trans ASLP 2012 paper | Further material | Matlab toolbox larc-0.1.zip

ICASSP 2010 paper | Further material

EM for Sparse and Non-Negative PCA

Classical principal component analysis produces dense projection vectors with mixed signs. For some applications, sparse and/or non-negative solutions are more appropriate. We propose an algorithm that is efficient for large and high-dimensional datasets and can handle the case where the number of features exceeds the number of observations.
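EM for PCA alternates between projecting the observations onto the current direction (E-step) and refitting the direction by least squares (M-step). The sketch below shows only the unconstrained case for the first component, assuming a features × observations data matrix; the sparse and non-negative variants constrain the M-step, which is not reproduced here. The function name is hypothetical.

```python
import numpy as np


def em_pca_first_component(X, n_iter=200, seed=0):
    """Leading principal direction of X (d features x n observations) via EM.

    Only products with X are formed, never the d x d covariance matrix,
    so the cost stays manageable when d is much larger than n.
    """
    X = X - X.mean(axis=1, keepdims=True)  # center each feature
    rng = np.random.default_rng(seed)
    w = rng.standard_normal(X.shape[0])
    for _ in range(n_iter):
        y = X.T @ w / (w @ w)  # E-step: coordinates of each observation along w
        w = X @ y / (y @ y)    # M-step: least-squares direction given y
    return w / np.linalg.norm(w)
```

Combining the two steps gives w ∝ X Xᵀ w, i.e. a power iteration on the scatter matrix, which is why the iteration converges to the leading principal direction.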

ICML 2008 paper | Matlab toolbox emPCA-0.4.zip | R package

Since the publication of the ICML paper, the method has been substantially expanded, and a mature implementation is available as an R package. I also wrote up an example from the domain of portfolio optimization, and compared different methods on a gene expression data set.

Non-Negative CCA for Audio-Visual Source Separation

We apply canonical correlation analysis to the task of audio-visual source separation. By enforcing the proper constraints on the audio and video projection vectors, we are able to identify sources in video and acoustically separate them with the help of a microphone array.
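For reference, unconstrained CCA finds projections a and b that maximize the correlation between X a (for example video features) and Y b (for example audio features). The sketch below computes the leading canonical pair by whitening both blocks and taking an SVD. It deliberately omits the constraints on the projection vectors that are the point of the paper; the function names and the small ridge term are assumptions.

```python
import numpy as np


def _inv_sqrt(C):
    """Inverse matrix square root of a symmetric positive-definite matrix."""
    vals, vecs = np.linalg.eigh(C)
    return vecs @ np.diag(1.0 / np.sqrt(vals)) @ vecs.T


def cca_first_pair(X, Y, reg=1e-8):
    """Leading canonical pair for X (n x p) and Y (n x q); rows are observations.

    Returns projection vectors a, b and the canonical correlation rho, where
    a and b maximize corr(X @ a, Y @ b). No sign or sparsity constraints.
    """
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    n = X.shape[0]
    Cxx = X.T @ X / (n - 1) + reg * np.eye(X.shape[1])  # small ridge for stability
    Cyy = Y.T @ Y / (n - 1) + reg * np.eye(Y.shape[1])
    Cxy = X.T @ Y / (n - 1)
    Wx, Wy = _inv_sqrt(Cxx), _inv_sqrt(Cyy)
    # In whitened coordinates, the top singular pair gives the top canonical pair.
    U, s, Vt = np.linalg.svd(Wx @ Cxy @ Wy)
    return Wx @ U[:, 0], Wy @ Vt[0], float(s[0])
```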

MLSP 2007 paper | NIPS 2006 workshop paper | R package

The algorithm presented in the MLSP paper has also been substantially expanded into a general purpose R package for sparse and non-negative canonical correlation analysis. A demonstration is given in this blog post.